We pulled Claude Code into a product design meeting (and accidentally became its subagents)

The best explanation of AI subagents and swarms is being in one.

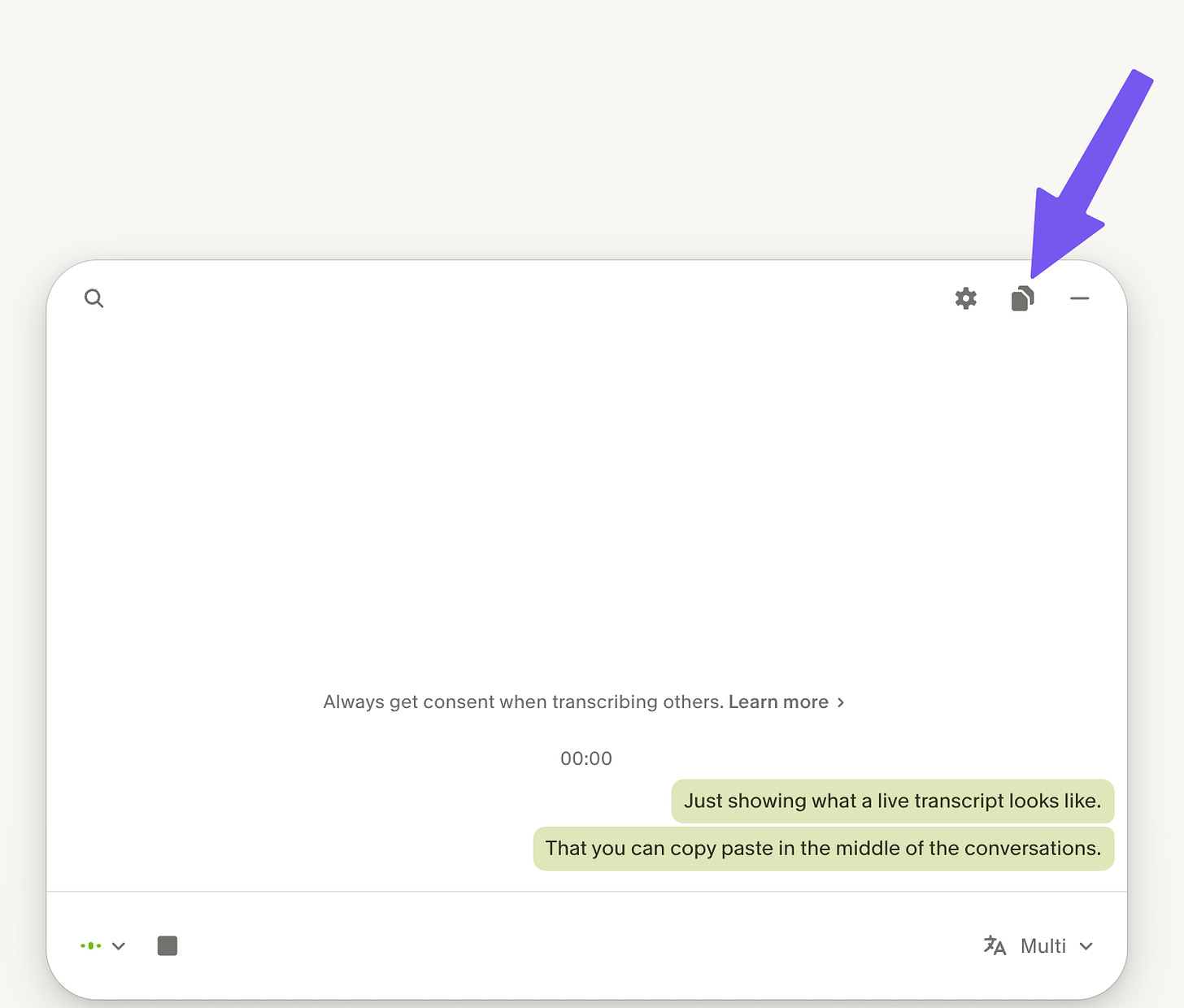

Maxim and I sat down to brainstorm a notification design problem this weekend. We wanted Claude's help, so we started holding down the dictation button while we talked to each other.

Claude would think, ask us a question, and we’d go off and discuss. Then we’d dictate our answer back.

“How time-sensitive is the notification?”

“How many results might pile up before someone checks?”

“What if we had no notification?”

In between Claude’s questions, we’d have a lengthy conversation. We didn’t transcribe everything we said, just the bottom line. When it felt like one of us was about to land an important point, we’d hold down the dictation button, say it, and hit enter.

Claude got our creative juices flowing. It kept us moving, pushed us to think bigger, and helped us articulate a framework for our decision faster. Claude was an excellent addition to the meeting. (Not everything Claude said was brilliant, but neither are most things I say.)

I realized this wasn’t my first time pulling AI into a live meeting. When Laurel was traveling and we had to make some complicated decisions, we did a Zoom call. We really wanted Claude’s help, so:

I screenshared Claude

I turned on Granola

We talked about it

We paused, pasted our transcript into Claude, and read the answer

We talked about it

We paused, pasted our transcript into Claude, and read the answer

etc.

Full disclosure: this was super manual. Each time, I had to paste the whole transcript into TextEdit so I could trim it down to just the part since we’d left off. But it worked.

Another option is to have the call on your laptop speakers (not headphones), and hold down the dictation button (I use Wispr Flow these days).

This also works one-sided: a friend in sales told me about a call that was going south. Mid-conversation, he copied his Granola transcript into AI, and it gave him one discovery question to ask. That question changed the tone of the entire conversation, and he eventually closed the deal.

Imagine how valuable this could be:

Live coaching during a sales call

Live coaching during a hard conversation

Two people having a hard conversation who both want AI present (like couples therapy for the smaller things, where hiring someone isn’t worth the cost)

I’d love for this to be less clunky:

Granola, Grain, Fathom, etc. folks: can you make the live transcript available through your MCP integration? Something like `live_conversation_latest_since(timestamp)`, so AI can chime in with full conversation context. (Or leave the tool response hanging until a natural pause in the conversation, and then return the incremental transcript.)
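To make the request concrete, here is a minimal sketch of what an incremental transcript tool could look like on the provider side. Everything in it is hypothetical: `LiveTranscript`, `Segment`, `is_natural_pause`, and `live_conversation_latest_since` are illustrative names, not a real Granola, Grain, or Fathom API, and timestamps are assumed to be seconds since the call started.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Segment:
    timestamp: float  # assumed: seconds since the call started
    speaker: str
    text: str


@dataclass
class LiveTranscript:
    """Hypothetical in-memory live transcript (not a real vendor API)."""
    segments: List[Segment] = field(default_factory=list)

    def append(self, segment: Segment) -> None:
        self.segments.append(segment)

    def latest_since(self, timestamp: float) -> List[Segment]:
        # Only segments strictly after `timestamp`, so each call is incremental
        # and the AI never re-reads what it has already seen.
        return [s for s in self.segments if s.timestamp > timestamp]


def is_natural_pause(transcript: LiveTranscript, now: float, pause_seconds: float = 3.0) -> bool:
    # Crude pause detector (an assumption): nobody has spoken for `pause_seconds`.
    if not transcript.segments:
        return False
    return now - transcript.segments[-1].timestamp >= pause_seconds


def live_conversation_latest_since(transcript: LiveTranscript, timestamp: float) -> str:
    # Hypothetical tool handler: format the new segments as plain text for the model.
    new = transcript.latest_since(timestamp)
    return "\n".join(f"[{s.timestamp:.0f}s] {s.speaker}: {s.text}" for s in new)
```

The "leave the tool response hanging" variant would simply poll `is_natural_pause` before returning `live_conversation_latest_since(...)`, which replaces my copy-paste-and-trim routine in TextEdit.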

And you, AI voice mode providers: what if AI could just accept a Zoom/Meet/Teams invite link and join in?

I’m hoping these exist and I’m late to the party. If you personally use one, reply and let me know!

Maxim and I became Claude Code’s subagents

Think about what was actually happening in that brainstorm:

Claude would ask us a question (aka prompt us)

Maxim and I would talk for a while (aka reasoning)

Then we’d distill the bottom line and dictate it back to Claude (aka tool response)

Claude would process it and prompt us with a new question (aka the main agent orchestrating us)

Sounds a lot to me like we were AI’s subagents. I don’t mean this in a philosophical, Matrix sense: noticing that I was acting as a subagent deepened my understanding of what subagents are.

Remember, a “subagent” is when one AI agent (aka chat thread) opens a fresh, blank AI agent (aka chat thread) to get an answer without spending its own context window tokens. The subagent gets a request (a prompt, written by the original agent) and gives back a response (the bottom-line answer, returned to the original agent). If you want to sound smart in a meeting, the fancy term for this is “uncorrelated context window.”

In our brainstorm, Claude’s prompt to us was its multiple-choice questions (e.g. “How time-sensitive is the notification?”). Our subagent response was: “Not urgent, but important. Can have a delay of a few hours but not more.” Claude processed that and called us again with a new prompt.
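Here is the same loop as a toy sketch. It assumes a "model" is just a function from a list of messages to a reply; `toy_model` is a hypothetical, deterministic stand-in for a real LLM call, and `run_subagent` / `main_agent_step` are illustrative names. The point it shows: the subagent starts from a blank context, and only the question and the bottom line ever land in the main agent's context.

```python
from typing import Callable, List

# Assumed shape of a "model": takes a context (list of messages), returns a reply.
Model = Callable[[List[str]], str]


def run_subagent(model: Model, prompt: str) -> str:
    # Fresh, blank context: the subagent sees only the prompt,
    # never the main agent's history.
    context = [prompt]
    reasoning = model(context)  # the long back-and-forth (Maxim and me talking)
    context.append(reasoning)
    # Ask for the bottom line; only this is returned to the caller.
    return model(context + ["Summarize your answer in one line."])


def main_agent_step(model: Model, main_context: List[str], question: str) -> str:
    main_context.append(f"ASK: {question}")
    answer = run_subagent(model, question)
    # The main agent's context grows by the question and the bottom line only;
    # the subagent's reasoning never lands here.
    main_context.append(f"RESULT: {answer}")
    return answer


def toy_model(context: List[str]) -> str:
    # Hypothetical stand-in for an LLM: long "reasoning" first, short answer on request.
    if context[-1].startswith("Summarize"):
        return "Not urgent, but important. A few hours of delay is fine."
    return "...twenty minutes of discussion about batching and badges..."
```

Calling `main_agent_step(toy_model, main, "How time-sensitive is the notification?")` leaves `main` holding the question and the one-line answer, while the twenty minutes of discussion stays with the subagent.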

Btw, what I love about Cursor is that you can see this very easily. When a model calls a subagent, you can click into it to see the original prompt, the subagent’s actions, and its response to the main agent. (Another way I’ve seen this in action: I had Claude Code write and workshop standup comedy.)

“Agent swarms” are just subagents with friends

Let’s throw a wet blanket on the buzzword: a “swarm” is just multiple subagents running at the same time.
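A minimal sketch of that definition, using Python's standard `concurrent.futures` thread pool. The `subagent` function is a hypothetical placeholder for a fresh agent with its own blank context; `swarm` and `orchestrate` are illustrative names, not any framework's API.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def subagent(prompt: str) -> str:
    # Hypothetical stand-in for a fresh agent with its own blank context window.
    return f"bottom line for: {prompt}"


def swarm(prompts: List[str], worker: Callable[[str], str] = subagent, max_workers: int = 4) -> List[str]:
    # A "swarm" here is just several subagents running at the same time.
    # pool.map returns results in the same order as the prompts.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(worker, prompts))


def orchestrate(question: str, angles: List[str]) -> str:
    results = swarm([f"{question} (angle: {a})" for a in angles])
    # The main agent still synthesizes the results one step at a time;
    # running subagents in parallel doesn't remove that sequential step.
    return "\n".join(results)
```

Note the last step: the fan-out is parallel, but the synthesis is not, which is one reason more parallel agents don't automatically mean a faster answer.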

I haven’t encountered a killer use case for AI swarms. As with humans, throwing more parallel agents at a problem feels limited. As Fred Brooks put it, "The bearing of a child takes nine months, no matter how many women are assigned."

Even if swarms are only an incremental step beyond subagents, they’re still a cool idea.

Imagine for a moment if the “AI swarm agents” were just humans. What would that look like?

AI sends out a batch of surveys

AI facilitates a large-scale brainstorm, cross-pollinates ideas, synthesizes the results

A 360 review orchestrated by AI

AI collecting product feedback at scale

In that sense, are UserTesting, Mechanical Turk, and SurveyMonkey the original “swarms”? It’s a fun thought, and makes the buzzword more accessible.

Taking the “sub” out of subagents

As a subagent, I resent the implication of hierarchy. Subagents aren’t really “sub.” They’re participants in a conversation, like users and tools.

Maxim and I brought connection to the real world, a lifetime of context, taste, and judgment. Claude brought knowledge and extra creative brain cells. We all read the messages, thought about them, and gave our best response.

When I pull AI into my real work, the buzzwords lose their bite, and my AI product sense gets a little stronger. This started as pulling AI into a brainstorm about notifications. Turns out it was also the clearest explanation of subagents I’ve found: just being one.

I suspect it’s only a matter of time before Claude introduces its own AI meeting assistant. A system that listens to meetings in real time and automatically brings in relevant context, documents, and memory could fundamentally change how meetings work.